Housam Babiker

University of Alberta

News

- June 1, 2026: Two papers on interpretability were accepted to ECML-PKDD 2026, Italy.

- April 7, 2026: Our work on offline planning and distillation for TRMs was accepted to ACL 2026, US.

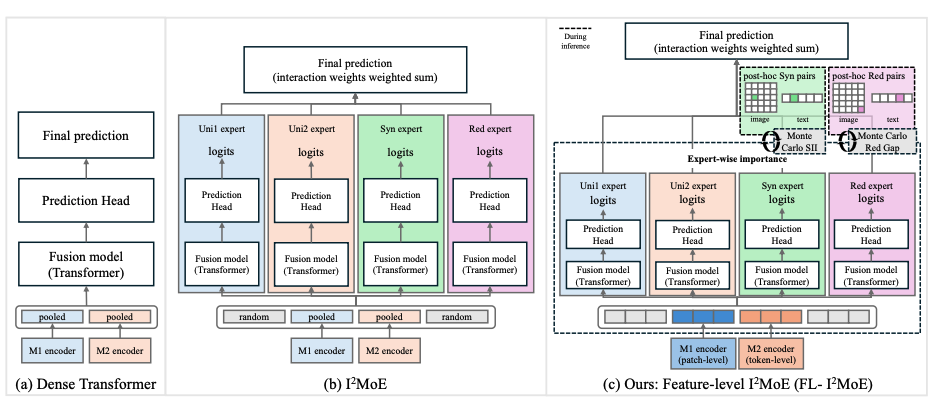

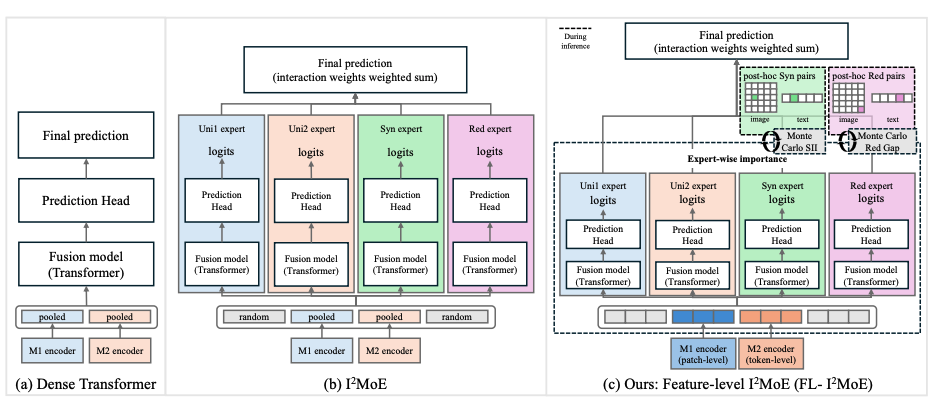

- March 31, 2026: Our paper on feature interaction in multimodal transformers was accepted to ICPR 2026, France. [Paper]

- March 22, 2026: A new preprint on feature interaction in multimodal transformers is available. [Paper]

- Feb 2026: Our paper on multi-task learning was accepted to LREC 2026, Spain. [Paper]

- Jan 2026: PC member for ACL 2026.

- Jan 2026: A new preprint on multi-task learning is available. [Paper]

- Oct 2025: A new preprint investigating the explainability and interpretability of large language models (LLMs) is available. [Paper]

- July 2023: Our work on learning intermediate representations for hierarchical explanations was accepted to ECAI 2023.

- July 2023: Our work on explaining RL agents for autonomous driving actions was accepted to ITSC 2023.

- March 2023: Defended my PhD thesis.

- August 2022: Our paper entitled "Locally Distributed Activation Vector for Feature Attribution" was accepted to COLING 2022 in Gyeongju, South Korea.

- June 2022: Our paper entitled "Neural Networks with Feature Attribution and Contrastive Explanations" was accepted to ECML-PKDD 2022 in Grenoble, France.

- May 2022: Presented our poster, "Self-explainable models in natural language processing", at AI Week 2022 in Edmonton, Canada.

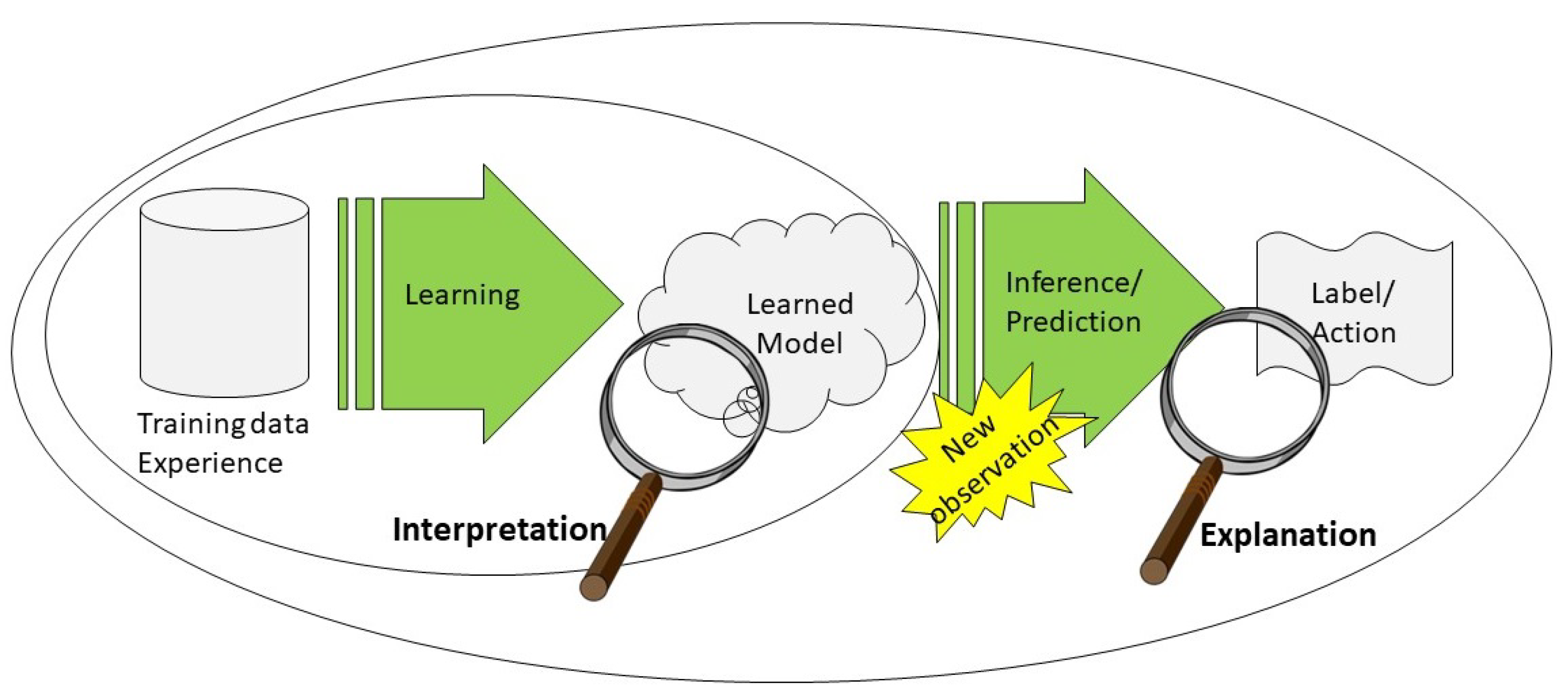

- Nov 2021: Our paper entitled "A Multi-Component Framework for the Analysis and Design of Explainable Artificial Intelligence" was published in the Machine Learning and Knowledge Extraction journal.

Research

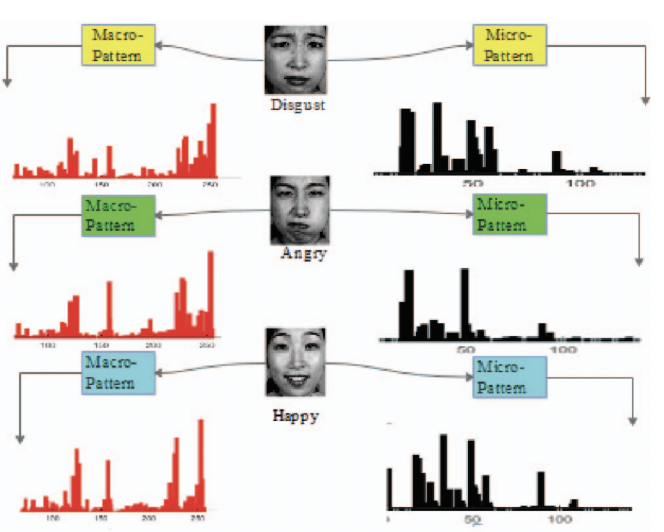

Research on making deep learning and deep reinforcement learning more interpretable and explainable is receiving much attention. One of the main reasons is the application of deep learning models to high-stakes domains. Explanations also serve as a proxy for debugging models, which can improve performance and yield new insights, and as a proxy for compression and distillation. In general, interpretability is an essential component of deploying deep learning models. In my doctoral research, I worked on the explainability and robustness of deep learning, primarily in NLP and computer vision. My general research interests include Explainable AI, Deep Learning, and Foundation Models for Decision Making.

Research Articles

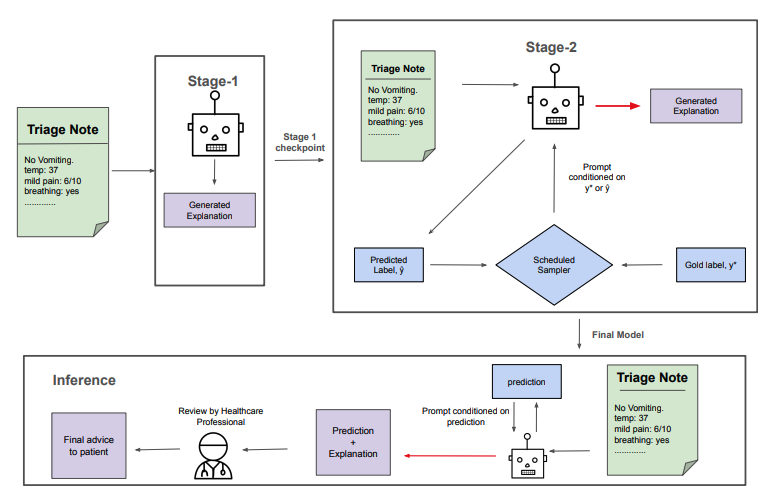

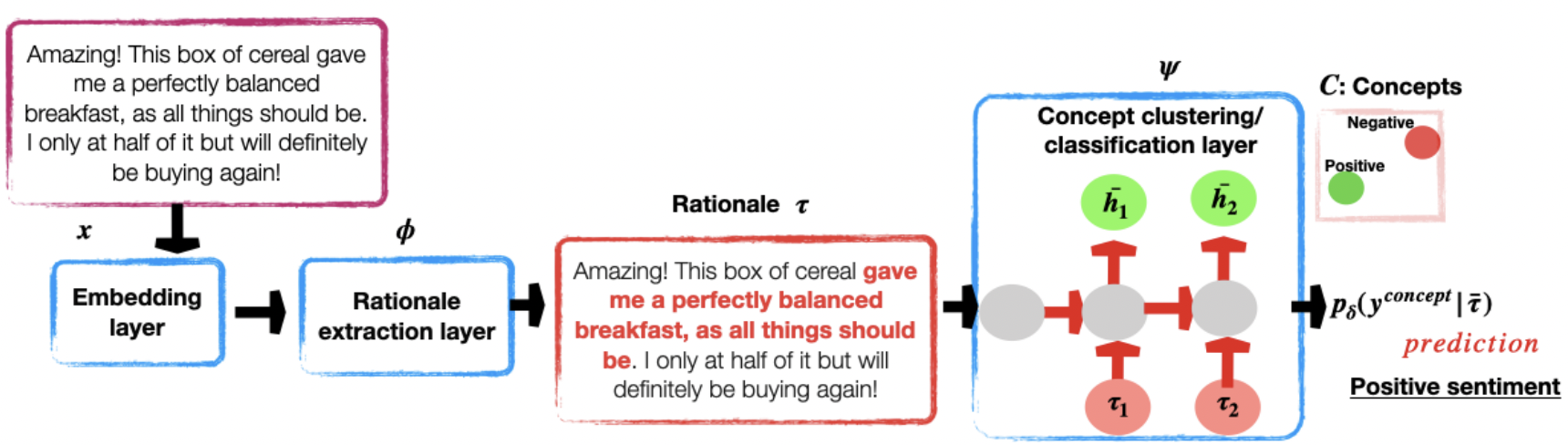

From Intermediate Representations to Explanations: Exploring Hierarchical Structures in NLP

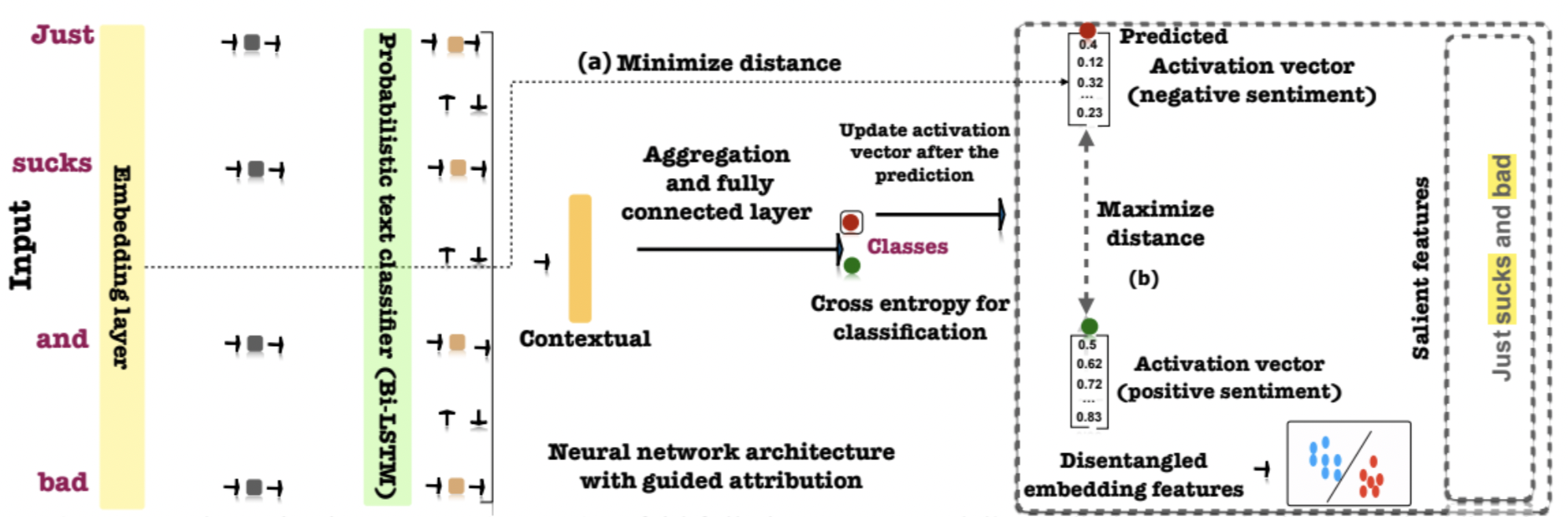

Neural Networks with Feature Attribution and Contrastive Explanations

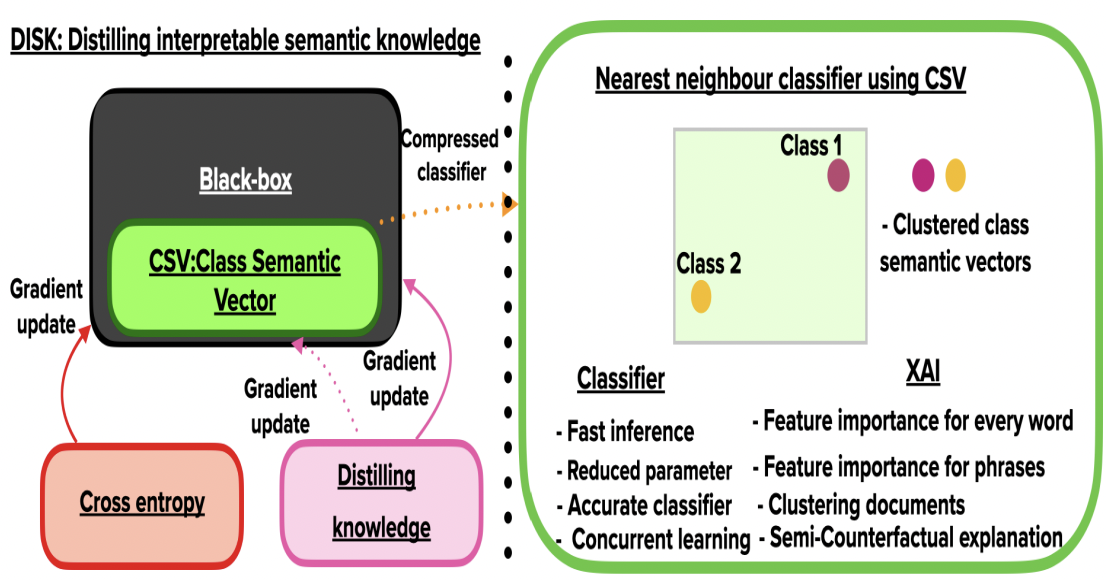

DISK-CSV: Distilling Interpretable Semantic Knowledge with a Class Semantic Vector

A Multi-Component Framework for the Analysis and Design of Explainable Artificial Intelligence

Selected Awards

- Highest performance on Task 4 of the COLIEE Competition, Japan, 2018.

- GSA Travel Award, University of Alberta, Canada, 2017.

- Full Doctoral Scholarship: Awarded by the Computing Science Department of the University of Alberta, Canada, 2016.